To our next generation, whether still in college or a few years past graduation: consider becoming an HR consultant for both Productivity & Welfare. The field raises questions on how to measure human trustworthiness and how to mitigate tipping points, so as to ensure a risk-free approach for both the Plant and the Human Operator. Be a leader in fusing modern systems thinking with data-driven and analysis-driven forecasting methodologies.

___________________________________________________________________________________________________________________________________________________

A Quantitative Approach towards Assessment of Human Trustworthiness & Work-Performance, in Critical Industries :

1.0 Introduction :

1.1 While trustworthiness is a very subjective concept, studies have been undertaken to evolve a parametric understanding of the behavior of human operators, particularly those with critical responsibilities, e.g., nuclear plant operation and maintenance. Such parametric studies may not be very accurate, but they can provide an initial symptomatic understanding of a person’s normal behavioral pattern vis-à-vis noticeable deviations, and can thereby offer (albeit with a degree of uncertainty) a possible prediction w.r.t. sudden changes in his/her mental framework. Such a change could be a temporary phenomenon, possibly induced by individual health or emotional issues, and could very well be reversible. However, for the period of time when the human operator is not in his/her normal behavioral state, it would be best to ensure that he/she does not carry out any critical function in the plant.

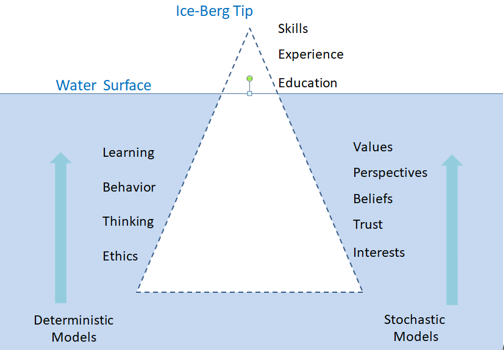

1.2 The iceberg model is a powerful metaphor and tool used in systems thinking to help us understand the different levels of a complex system or problem. Just like an iceberg, it suggests that what we see on the surface (the events) is only a small part of the whole story. In the iceberg model, above the waterline lies everything that is visible or observable. This includes all forms of (inter)action, such as behavior, skills, and knowledge of an individual. In organizations, this also applies to job descriptions, organizational structure, and work procedures as visible elements. “The Hidden Depths of the Iceberg,” is based on the idea that our awareness and understanding of a situation or a person is often incomplete [2,6].

1.3 Thus, the Iceberg Model suggests that in order to truly understand people’s behaviors and motivations, we must look beyond surface-level observations [1,2,3,4]. By recognizing the existence and influence of the unconscious mind, we can gain a deeper understanding of ourselves and others. This awareness can lead to personal growth, improved relationships, and more comprehensive problem-solving. Iceberg Models have been widely used in various fields, including sociology, communication studies, and management. In business and leadership, understanding the iceberg concept can aid effective team dynamics and help identify persons for leadership roles.

1.4 Building on the above iceberg-model-based evaluation of employees, which has evolved into a standard method for recruiting persons for leadership roles [2], this article attempts an approach that uses math–stat techniques to assess the trustworthiness of human operators (especially in critical industries and other critical infrastructure projects). This is expected to be an active area of research. The basic premise is to consider the human operator dynamics as a linear time-invariant system, and to model it in a state–space framework with an uncertainty component.
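As a minimal sketch of this premise, a discrete-time linear time-invariant state–space model with additive uncertainty can be written as below. The symbols are illustrative assumptions, not the author's notation: $x_k$ collects the operator attributes (including the hidden ones), $u_k$ the inputs, $y_k$ the observed outcomes, and $w_k$, $v_k$ are zero-mean noise terms standing for modelling and measurement uncertainty.

```latex
\begin{aligned}
x_{k+1} &= A\,x_k + B\,u_k + w_k, & w_k &\sim \mathcal{N}(0, Q),\\
y_k     &= C\,x_k + v_k,          & v_k &\sim \mathcal{N}(0, R).
\end{aligned}
```

Time invariance means that the matrices $A$, $B$, $C$ and the noise covariances $Q$, $R$ do not change with the time index $k$.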

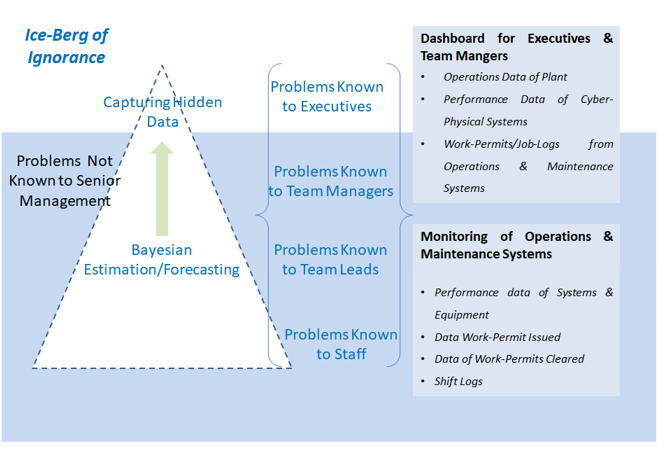

2.0 Formulating a Dynamic Model (Difference (Discrete) Equation Model), for a human operator (with appropriate skill-sets)

2.1 Considering the various attributes of a human operator (physiological, psychological, cognitive, etc.), we need to choose the order of a dynamic model that captures all the attributes. We then estimate the attribute parameters using appropriate math–stat algorithms; once these are incorporated in the dynamic model construct, it becomes a reasonably close representation of the human operator. In the iceberg approach, the visible attributes are available or measurable, and the method is to estimate the hidden parameters (attributes) through a dynamic system solver and a Bayesian solver. Besides other methods, capturing the hidden parameters and their nuances requires effective psycho-analytic evaluation coupled with the limited data captured from an individual’s earlier records (academic/extra-curricular/character/behavior-at-school/behavior-at-home/behavior-in-public, etc.). Such an approach requires multi-disciplinary expertise in psycho-analysis guidelines, HR practices, data analytics, etc., so as to develop a questionnaire that probes “the below water level” attributes (behavioral, knowledge, beliefs, self-image, drive-motive, personality, etc., as considered in the basic concepts listed above) of a human operator. The accompanying figure gives an overview of the iceberg concept and what one is looking for in order to do a close assessment of an individual, essentially with the idea of ascertaining fitness for purpose for a particular job/assignment/function [11].

2.2 By appropriately connecting the answers (of the questionnaire) to the above-water-level, visible functions (viz. skill-sets, experience, academic records, etc.), the various attributes that are not visible may be understood. The questionnaire is designed so that the questions form both the inputs (signals) to the proposed auto-regressive moving-average model with exogenous inputs (ARMAX) of the human operator, and the control input matrix. Similarly, the answers to the questionnaire are mapped to obtain the output vector (outcomes) and the relationship matrix. With a large input–output data set, the coefficient vector of the ARMAX model (the human characterization parameters) may be obtained through a control-theoretic algorithm termed ‘System Identification’. The Difference Equation (ARMAX model) thus obtained may then be used to obtain the state equation (defining the human dynamics) and the measurement equation (defining the outcomes).
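To make the system-identification step concrete, the sketch below fits a difference-equation model to input–output data by ordinary least squares. It is a simplification and not the author's method: it fits an ARX model (ARMAX without the moving-average noise term), since a full ARMAX fit needs an iterative estimator. The function names, the model orders, and the synthetic data are all assumptions made for illustration; in the article's setting `u` would hold coded questionnaire inputs and `y` the coded outcomes.

```python
import numpy as np

def fit_arx(y, u, na=2, nb=2):
    """Least-squares fit of the ARX model
    y[k] = -a1*y[k-1] - ... - a_na*y[k-na] + b1*u[k-1] + ... + b_nb*u[k-nb].
    Returns the coefficient arrays (a, b)."""
    y = np.asarray(y, dtype=float)
    u = np.asarray(u, dtype=float)
    start = max(na, nb)
    rows = []
    for k in range(start, len(y)):
        past_y = [-y[k - i] for i in range(1, na + 1)]  # auto-regressive part
        past_u = [u[k - j] for j in range(1, nb + 1)]   # exogenous-input part
        rows.append(past_y + past_u)
    phi = np.array(rows)                                # regressor matrix
    theta, *_ = np.linalg.lstsq(phi, y[start:], rcond=None)
    return theta[:na], theta[na:]

def simulate_arx(a, b, u):
    """Generate noise-free output from known ARX coefficients (test data only)."""
    na, nb = len(a), len(b)
    y = np.zeros(len(u))
    for k in range(max(na, nb), len(u)):
        ar = -sum(a[i] * y[k - 1 - i] for i in range(na))
        ex = sum(b[j] * u[k - 1 - j] for j in range(nb))
        y[k] = ar + ex
    return y

# Hypothetical "true" coefficients, used only to check that the fit recovers them.
rng = np.random.default_rng(1)
u = rng.standard_normal(500)
a_true, b_true = [-1.5, 0.7], [1.0, 0.5]
y = simulate_arx(a_true, b_true, u)

a_hat, b_hat = fit_arx(y, u)
print("a estimate:", a_hat)
print("b estimate:", b_hat)
```

With noise-free synthetic data the estimates match the chosen coefficients to numerical precision. With real, noisy questionnaire data the estimates would carry uncertainty, and the model orders `na` and `nb` would themselves have to be selected.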

3.0 Bayesian Estimation for Assessment of the Trustworthiness of the Human Operator :

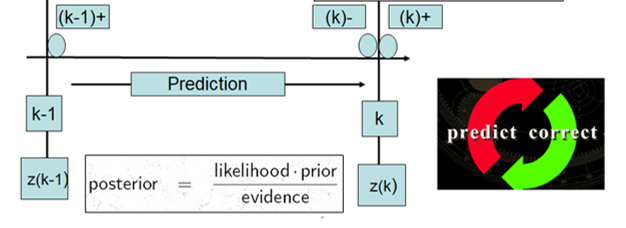

3.1 Having obtained the state equation and measurement equation, based on the system identification approach, mentioned above, a Bayesian estimation approach may be considered. Such an approach uses a predictor–corrector estimation algorithm for estimation of the state vector, wherein the non-measurable states effectively represent the attributes below the water level. Based on the state–space model of the human operator, formed from the initial set of questions and answers, followed by the dynamics solver, the initial priors are fixed (attributes and error matrices due to uncertainty in modelling).

3.2 With the help of a further set of questions (part-2 of the questionnaire), the dynamics are time-marched, likelihood functions (based on the answers at each time instant k) compared, and the posterior obtained. Once the posterior state converges and the posterior covariance is minimized, these can be considered to be the best understanding of the attributes below the water level.
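The predict, compare, and update cycle described here corresponds to the standard recursive Bayes filter. As a sketch in assumed notation ($x_k$ for the hidden attribute state, $y_k$ for the answers at instant $k$, and $y_{1:k}$ for all answers up to $k$), the prior is time-marched through the dynamics and then corrected by the likelihood:

```latex
\begin{aligned}
\text{predict:}\quad p(x_k \mid y_{1:k-1}) &= \int p(x_k \mid x_{k-1})\, p(x_{k-1} \mid y_{1:k-1})\, dx_{k-1},\\[4pt]
\text{update:}\quad p(x_k \mid y_{1:k}) &= \frac{p(y_k \mid x_k)\, p(x_k \mid y_{1:k-1})}{\int p(y_k \mid x_k)\, p(x_k \mid y_{1:k-1})\, dx_k}.
\end{aligned}
```

For linear dynamics with Gaussian noise, both densities stay Gaussian, so only a mean and a covariance need to be propagated.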

As a start, use of the standard Kalman filter is appropriate if the system dynamics are considered to be linear and time invariant. The state noise and measurement noise may be fixed a priori, based on the attributes seen above the water. The figure above gives an overview of the approach explained above [8]. The time samples are obtained at k-1, k, …, and a recursive equation is formulated for obtaining the posterior [8].
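The predictor–corrector recursion of a standard Kalman filter can be sketched as follows. This is a minimal illustration under stated assumptions, not a model of any real operator: the two-state system, the matrices `A`, `C`, `Q`, `R`, and the synthetic data are all hypothetical. State 0 plays the role of a visible attribute that is measured directly, and state 1 plays the role of a hidden attribute that is inferred only through its coupling to state 0.

```python
import numpy as np

def kalman_step(x, P, y, A, C, Q, R):
    """One predictor-corrector cycle of the linear Kalman filter."""
    # Predictor: time-march the prior through the dynamics.
    x_pred = A @ x
    P_pred = A @ P @ A.T + Q
    # Corrector: weigh the new measurement against the prediction.
    S = C @ P_pred @ C.T + R                  # innovation covariance
    K = P_pred @ C.T @ np.linalg.inv(S)       # Kalman gain
    x_post = x_pred + K @ (y - C @ x_pred)
    P_post = (np.eye(len(x)) - K @ C) @ P_pred
    return x_post, P_post

# Hypothetical model: only state 0 is measured; state 1 is hidden.
A = np.array([[0.9, 0.1],
              [0.0, 0.95]])
C = np.array([[1.0, 0.0]])
Q = 0.01 * np.eye(2)      # state noise, fixed a priori
R = np.array([[0.1]])     # measurement noise, fixed a priori

rng = np.random.default_rng(0)
x_true = np.array([1.0, 2.0])
x_est = np.zeros(2)       # initial prior mean
P = 10.0 * np.eye(2)      # initial prior covariance (large: little is known)
trace_history = [np.trace(P)]

for k in range(50):
    # Synthetic "truth" and measurement, used only to drive the filter.
    x_true = A @ x_true + rng.multivariate_normal(np.zeros(2), Q)
    y = C @ x_true + rng.normal(0.0, np.sqrt(R[0, 0]), size=1)
    x_est, P = kalman_step(x_est, P, y, A, C, Q, R)
    trace_history.append(np.trace(P))

print("posterior covariance trace: first", trace_history[0], "last", trace_history[-1])
```

The trace of the posterior covariance falls from its initial value and settles, which mirrors the convergence criterion described in 3.2. The hidden state becomes estimable here only because it influences the measured state through `A`; if that coupling were absent, no amount of data would reduce its uncertainty.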

4.0 Need for Understanding the Human Attributes — Mitigation of a Tipping Point in Individuals

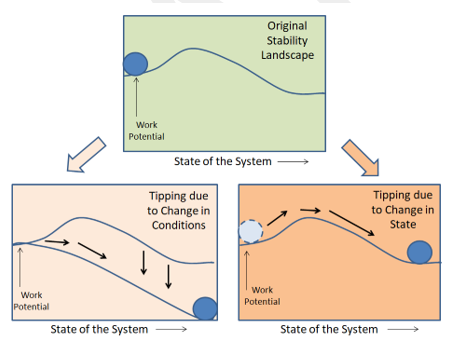

4.1 In the dynamical systems domain, there are three main ways in which sudden transitions can occur in non-linear systems [9], and these may well be applied to human operator dynamics. This pre-supposes that the human operator has the requisite skill-sets, experience, and strong work ethics. One commonly observed situation is when one or more of the system’s parameters change and reach critical values, causing a sudden shift (bifurcation) of the system to a new stable state; this is referred to as bifurcation-induced tipping. The second type of transition occurs due to perturbations that force the system to tip from one state to another. It occurs in a multi-stable system, in which two or more stable states co-exist for the same set of system parameters, and is referred to as noise-induced tipping. The third type, generally termed rate-induced tipping in the literature, occurs when a parameter changes too quickly for the system to track its moving stable state.

4.2 The accompanying figure is a representative suggestion of how a tipping point is initiated by either a change in conditions or a change in state. While these paradigms may have more than one mathematical interpretation, in a control-theoretic framework they could be considered to be a change in the parameters or constraints of a governing ODE (change in conditions) or a change in the state variables (change in state) of a system modeled in state–space form.
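The distinction between a change in conditions and a change in state can be illustrated with a toy bistable system. The sketch below is an assumption made purely for illustration and is not a model of a human operator: it integrates the scalar ODE dx/dt = x - x^3 + c, which for c = 0 has stable states at x = -1 and x = +1 separated by an unstable point at x = 0. The function name, step size, and parameter values are hypothetical.

```python
def simulate(x0, c, dt=0.01, steps=5000):
    """Forward-Euler integration of dx/dt = x - x**3 + c; returns the final state."""
    x = x0
    for _ in range(steps):
        x += dt * (x - x**3 + c)
    return x

# Baseline: the system starts in the lower stable state and stays there.
baseline = simulate(x0=-1.0, c=0.0)

# Change in conditions: the parameter c is pushed past its critical value
# 2 / (3 * sqrt(3)), about 0.385, at which the lower stable state vanishes.
changed_conditions = simulate(x0=-1.0, c=0.5)

# Change in state: the parameter is untouched, but the state itself is
# displaced across the unstable point at x = 0.
changed_state = simulate(x0=0.2, c=0.0)

print("baseline:          ", baseline)
print("changed conditions:", changed_conditions)
print("changed state:     ", changed_state)
```

In both altered runs the system ends in the upper stable state, but for different reasons: in the first the lower state ceases to exist, while in the second it still exists and the system has simply been knocked out of its basin of attraction.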

4.2.1 Tipping Point w.r.t. Task Execution by the Operator :

a) Change in Conditions may be interpreted as a change in operating regime (e.g., a rare stormy condition on the high seas for a sea captain, severe mid-air turbulence for a pilot, multiple transients in a power station or any process plant, etc.) beyond what the human operator had earlier been exposed to. Such a change in scenario (condition) may result in the operator making a major error in a standard operating procedure (SOP).

b) Change in State may be interpreted as the operator finding the system configuration to be different from what he/she has been prepared or trained to attend to. A configuration change here means that the adjoining/inter-connected systems, equipment, or controls are configured differently from what has been recorded in the control logs. Since such situations/scenarios are rare, this may result in the operator making a major error of judgment in correctly fixing the system-specific problem.

The probability of an error of judgment or confusion on the part of the human operator, leading to a major maintenance error, set-point error, or configuration discrepancy in critical Systems, Structures & Equipment (SSE) with the propensity to jeopardize the plant, can be considered as a tipping point of a trained human operator, w.r.t. a failure to carry out the specific task assigned or an inability to undertake it.

4.2.2 Tipping Point w.r.t. Physical/Mental Framework of Operator :

a) Change in Condition could be a change in team members (affecting team-work), management structure (affecting recognition, remuneration, etc.), procedural compliances, etc., resulting in the human operator regarding a particular task as involving a conflict of interest or as going against the standard protocols followed earlier, which he/she perceives as a safety violation. In this case the operator may be unable to undertake an assigned job or may fail to execute it.

b) Change in State could be a case wherein the original SSE is replaced with a technology upgrade (e.g., electrical systems to computer-based ones, display systems to menu-driven operator consoles, etc.), resulting in the operator feeling that he/she is becoming irrelevant (w.r.t. his/her original skill-set). In such a case the operator may fail to undertake the job.

An attempt at understanding how to model a human operator, by linking his/her skill-sets and motivational aspects in terms of stochastic ODEs/PDEs, is underway. Evolving the various governing equations of a human operator in terms of physiological/psychological/cognitive attributes requires a wide body of knowledge and calls for multi-disciplinary research.

5.0 The Iceberg Model for Ensuring Information Percolation from Field to Management :

5.1 Further to the trustworthiness assessment problem, every plant has to ensure effective information flow w.r.t. the various activities carried out on an SSE (especially safety-related ones), all the way up to the top management, where they are registered. This ensures that no gaps exist in either the safety or the configuration status of any of the SSEs [12].

5.2 In most large industrial complexes, supposedly trivial (as perceived by the field personnel) operation/maintenance actions on systems/equipment are very often not reported to the higher management, either deliberately or unintentionally. While filtering of information at every level from the field personnel to the top management is a necessary protocol, the filtering process should ensure that no vital safety/availability information pertaining to the field gets omitted. Such omissions may result in improper safety/performance planning and forecasting, and may thereby lower the overall plant safety levels.

5.3 In a data management framework, such an issue of missing information flow may be considered similar to a missing-data problem. By effective review of the various work-permit/job-completion statuses, which get recorded in standard computerized Operations & Maintenance Management data repositories, coupled with the plant operational data and System-specific on-line performance/availability data, many missing information aspects may be captured.
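A first step in such a review can be sketched as a simple reconciliation between the work-permit records and what actually reached management. Everything in the sketch is hypothetical: the `WorkPermit` fields, the permit identifiers, the SSE tags, and the rule that only closed, safety-related permits are checked are assumptions for illustration, not the schema of any actual Operations & Maintenance package.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class WorkPermit:
    permit_id: str        # hypothetical identifier
    sse_tag: str          # hypothetical tag of the SSE that was worked on
    safety_related: bool
    status: str           # e.g. "closed" or "open"

def find_unreported(permits, reported_ids):
    """Return closed, safety-related permits that are absent from the management report."""
    reported = set(reported_ids)
    return [
        p for p in permits
        if p.status == "closed" and p.safety_related and p.permit_id not in reported
    ]

# Illustrative records only.
permits = [
    WorkPermit("WP-001", "PUMP-A", True, "closed"),
    WorkPermit("WP-002", "VALVE-7", True, "closed"),
    WorkPermit("WP-003", "LIGHT-3", False, "closed"),
    WorkPermit("WP-004", "PUMP-B", True, "open"),
]
reported_ids = ["WP-001"]

gaps = find_unreported(permits, reported_ids)
print([p.permit_id for p in gaps])  # ['WP-002']
```

Only `WP-002` is flagged: `WP-001` was reported, `WP-003` is not safety related, and `WP-004` is still open. A fuller treatment would also cross-check against the plant operational data and the on-line performance/availability data, as described above.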

Using a similar iceberg model, as shown in the figure below, a Bayesian forecasting method based on a predictor–corrector approach may be considered.

6.0 Conclusion :

The field of human trustworthiness assessment is an advanced HR-related approach. It may be argued that such an approach tends to conflict with the privacy of personnel. However, industrial layouts are growing in complexity (being inter-twined with multiple layers of Cyber–Physical Systems), and field work is progressively being delegated to autonomous or semi-autonomous procedures. Such assessments are therefore considered necessary, given the extremely critical roles played by human operators w.r.t. ultimate safety.

******************

References & Further Reading :

1. Christian A. Mahringer, et al., “The Iceberg Model of Change: A taxonomy differentiating approaches to change”, Heliyon, Volume 11, Issue 2, 30 January 2025, e41952.

2. Mohan Sripada, HR Professional & Leadership Coach, “The Iceberg Model Concepts for Hiring CEOs of Corporates”, personal communication.

3. Kallol Roy, “A System Theoretic Framework for Modelling Human Attributes, Human Machine Interface and Cybernetics: A Safety Paradigm in Large Industries and Projects”, Chapter 14, Springer Series in Risk, Reliability & Safety Engg., Springer Nature, Singapore, 2023.

4. “A Systems Thinking Model: The Ice-berg”, article from Northwest Earth Institute.

5. “Iceberg Model: A Simplified Psychology Guide”, open-source introduction on the Internet.

6. “The Iceberg Model of David McClelland”, 19/07/2024, open-source article on the Internet.

7. Shankar Subramanian, “The Iceberg Model of Behaviour: A Vital Framework for Leaders”, LinkedIn profile.

8. Peter S. Maybeck, Stochastic Models, Estimation, and Control, Vol. 1, Academic Press, 1979.

9. Steven H. Strogatz, Non-Linear Dynamics & Chaos, Levant Books.

10. Swaminathan Natarajan, Retd. Chief Scientist, TCS, personal communication.

11. Kallol Roy, “The New Perceptions of HRP in the Evolving Framework of Industry 4.0 and Industry 5.0 and the Growth of Cyber Physical Systems”, to be published, Springer Series in Risk, Reliability & Safety Engg., Springer Nature, Singapore.

12. Kallol Roy, “Modelling of a Computerized Operation & Maintenance Package and Experiences in its use at Work”, unpublished.

For more information on suggested further reading and for any discussions related to the topics discussed in the article, the author may be contacted through Kattangal Chimes. Please write to kattangalchimes@gmail.com